Hello to all,

I am writing to you because I simply cannot find a solution to my problem myself.

I run DietPi in a VM on a Proxmox server.

Everything was installed and created exactly according to the instructions.

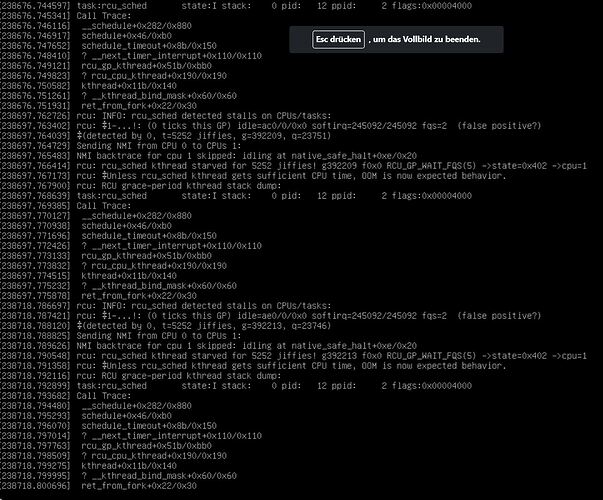

My problem is that after about one to three days DietPi stops working completely and gets stuck in a continuous loop. All services, such as Docker, then stop running, and I can only shut down the VM via the Proxmox console.

After a restart, everything runs perfectly again.

If you look at my screenshot, can you perhaps give me a tip on what I can do about this error?

Many thanks

Bye

Marcus

It looks like you are hitting the capacity limits of the VM, which is crashing the system.

RAM in particular, it seems. Check htop from time to time to see how much system memory is available and, if it is running low, which process is eating it.

Ok, thank you for your messages

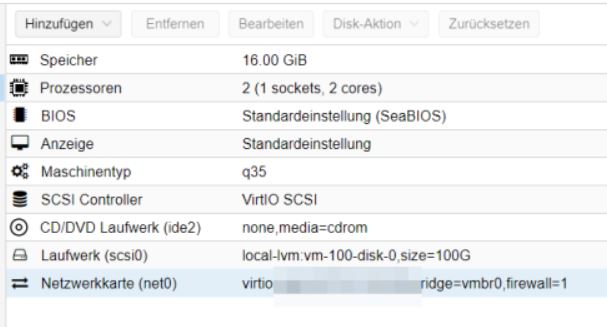

The VM currently has 16 GB of memory.

Among other things, Grafana runs on the DietPi, could that be the problem here?

How exactly do I go about finding out what you have suggested?

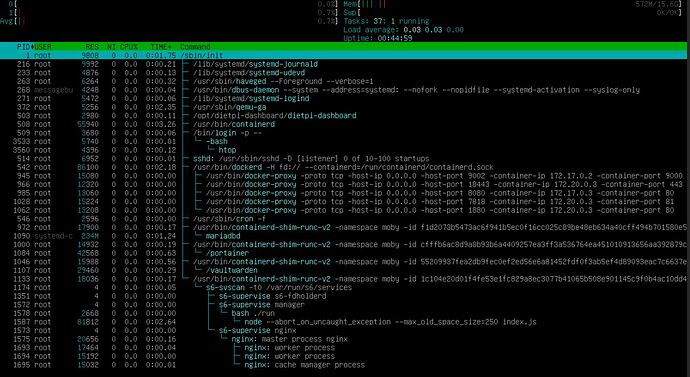

You could watch resource consumption using the htop command.

Alternatively, free -m or free -h could be an option.

I am using Proxmox VMs extensively and did not detect such problems.

But I have not used Docker in a VM up to now.

I am running Docker in a DietPi VM without any issues.

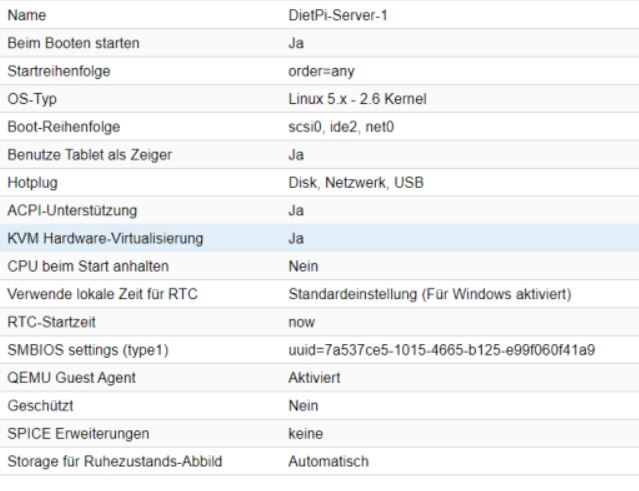

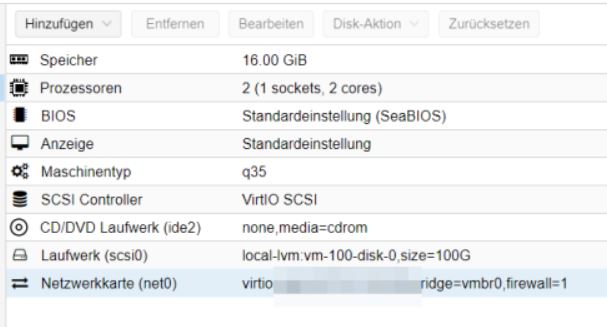

Please let us know the hardware settings of the VM.

I have put together everything you wanted to know.

Currently, DietPi simply freezes and I can no longer enter a command.

I have now also switched off Grafana. But the system is still very unstable.

Monitor the resource consumption over time, just to see how it develops. Also have a look at your host system to rule out issues there. Both @StephanStS and @HolgerTB run quite a few Proxmox systems.

The only difference I see to my DietPi machines is, that I set the options to

I am using Proxmox 7.3-4 with the latest updates.

Yes, I also use this version of Proxmox.

The funny thing is that all LXC containers and the other VMs run fine. That’s why I ruled out Proxmox in my troubleshooting.

The stupid thing about the current problem is that I have no way of logging it.

Then I would assume that one of the Docker containers in the VM is causing the problem, and I would repeat Joulinar's and Micha's proposal: execute free -m, e.g. every hour for one day, and check whether the free memory decreases.

If yes, do the same with htop, looking for the process “eating” the memory.

That is what they meant by observing the memory consumption over time.
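To make the hourly check less tedious, something like the following could work. It is only a sketch: the function names and the log path are my own choice, and it assumes free and ps from procps are installed (the default on Debian-based systems as far as I know). The log is written outside /var/log because, as far as I understand, DietPi-RAMlog keeps that directory in RAM, so it would be lost after a freeze.

```shell
#!/bin/sh
# Sketch only: helper names and the log path are assumptions, not DietPi tools.

# Read `free -m` output on stdin and print the "available" column (MiB).
available_mb() {
    awk '/^Mem:/ { print $7 }'
}

# Append one timestamped sample plus the five largest processes by RSS.
log_memory_sample() {
    logfile="$1"
    {
        printf '%s available_mb=%s\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$(free -m | available_mb)"
        ps -eo pid,rss,comm --sort=-rss | head -n 6
    } >> "$logfile"
}

# Example: one sample per hour for a day (uncomment to run).
# i=0; while [ "$i" -lt 24 ]; do log_memory_sample /root/memlog.txt; sleep 3600; i=$((i+1)); done
```

If the available_mb value keeps shrinking from sample to sample, the process list logged next to it should point to the culprit.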

You could also test this by running only one Docker container at a time to find out which container is causing the trouble.
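A rough way to do that from the shell, assuming the containers are managed directly with the docker CLI. stop_all_except and container_memory are names I made up for this sketch, and grafana is just an example container name.

```shell
#!/bin/sh
# Sketch only: stop_all_except and container_memory are hypothetical helpers.

# Stop every running container except the one named in $1.
stop_all_except() {
    keep="$1"
    docker ps --format '{{.Names}}' | while read -r name; do
        [ "$name" = "$keep" ] || docker stop "$name"
    done
}

# One-off snapshot of memory use per running container.
container_memory() {
    docker stats --no-stream --format 'table {{.Name}}\t{{.MemUsage}}\t{{.MemPerc}}'
}

# Example (uncomment to run): keep only a container called "grafana".
# stop_all_except grafana && container_memory
```

Leaving each container running alone for a day or two and comparing the container_memory output should narrow it down.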

Theoretically, you could set up an additional VM hosting a monitoring stack. This way you could watch resource consumption automatically.

Btw, did you already set up persistent system logs? If not, you could try the following:

dietpi-software uninstall 103 # uninstalls DietPi-RAMlog

mkdir /var/log/journal # triggers systemd-journald logs to disk

reboot # required to finalise the RAMlog uninstall

Then you can check system logs via:

journalctl

which will then also show logs from previous boot sessions. To limit the size, you can additionally apply e.g. the following:

mkdir -p /etc/systemd/journald.conf.d

cat << '_EOF_' > /etc/systemd/journald.conf.d/99-custom.conf

[Journal]

SystemMaxFiles=2

MaxFileSec=7day

_EOF_

This will limit logs to 14 days split across two journal files, so that with rotation you will always have between 7 and 14 days of logs available.
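Once the journal is persistent, the boot session before a freeze can be inspected after the restart. The following is a sketch under the assumption that the freeze is memory related, as suspected earlier in this thread. The helper names are made up, and the search pattern is just a guess at typical kernel OOM messages.

```shell
#!/bin/sh
# Sketch only: helper names are assumptions for this example.

# Keep only lines that hint at the kernel OOM killer.
oom_lines() {
    grep -i -E 'out of memory|oom-kill|oom_reaper|killed process'
}

# Summarise the boot before the current one.
previous_boot_report() {
    journalctl --list-boots --no-pager          # which boot sessions are stored
    journalctl -b -1 -p err --no-pager          # errors from the previous boot
    journalctl -k -b -1 --no-pager | oom_lines  # kernel messages about OOM kills
}

# Example (uncomment to run after a freeze and restart):
# previous_boot_report
```

If the last entries of the previous boot show the OOM killer at work, they usually name the process that was killed.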

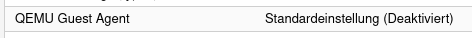

I see you activated the QEMU guest tools. Did you install them correctly?

Do you use memory ballooning? The tools must be installed correctly if you enable ballooning.

Here is a hint to install the QEMU tools correctly.
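For reference, on a Debian-based guest like DietPi the agent is normally installed roughly as below. Take it as a sketch: the function names are mine, it assumes the QEMU Guest Agent option is already enabled for the VM in Proxmox, and the VM may need a full stop and start afterwards so that the virtual device for the agent shows up in the guest.

```shell
#!/bin/sh
# Sketch only: function names are assumptions for this example.

# Install and start the agent inside the guest (run as root).
install_guest_agent() {
    apt-get update &&
    apt-get install -y qemu-guest-agent &&
    systemctl start qemu-guest-agent
}

# Succeeds only if the agent service is currently running.
guest_agent_active() {
    systemctl is-active --quiet qemu-guest-agent
}

# Example (uncomment to run):
# install_guest_agent && guest_agent_active && echo "agent running"
```

On the Proxmox host, qm agent <vmid> ping should then return without an error if the agent is reachable.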
