I’ve got DietPi installed on a few Pine64 devices, mainly a RockPro64 and a SOQuartz. I’ve been trying to figure out how to set up OpenCL to use the Mali GPUs on these devices, and perhaps the NPU on the SOQuartz as well, but I’ve not found any clear instructions for either. I hear a lot about the Mali Midgard/Panfrost stuff, but I’ve not found a document that describes how to even begin setting those things up. Evidently they aren’t installed on DietPi by default, and there doesn’t seem to be a package in the repos that provides them, or I haven’t found out which one it is. Any hints on how to get OpenCL to use the Mali drivers?

Hello dido,

you can go two possible routes:

The proprietary ARM OpenCL blobs, or the open-source path via Panfrost + Mesa OpenCL.

Here’s a comparison table AI made for me:

| Approach | OpenCL version actually available | Kernel dependency | Licensing | Recommended for |
|---|---|---|---|---|
| ARM proprietary blob | 2.0 Full Profile | Rockchip BSP | Closed | Maximum compatibility with vendor SDKs |
| Mesa (Panfrost + RustiCL) | 1.2 / 3.0 subset (upstream improving) | Mainline 6.x+ | Open | Long-term maintainable, open-source systems |

edit bc false info:

The thing is, the Rockchip kernel is on 4.x and there’s an experimental kernel on 5.x

I wonder what @MichaIng has to say about this

Rockchip mainline kernel is on 6.12 (LTS) and the vendor kernel on 6.1. In case of Quartz64, where we compile our own Linux builds, it is the latest Linux 6.17.

The Rockchip vendor kernel is used by default on RK3588 boards only, i.e. on none of the boards you listed.

Mainline kernel ships with open source drivers, like Lima and Panfrost from Mesa project, Panthor graphics for RK3588, and Hantro VPU. These should all work with the Mesa userspace libraries. DietPi images do not have graphics libraries pre-installed, but they are installed with desktops or other X and graphics applications. See available packages here to install via apt: Debian -- Details of source package mesa in trixie

For OpenCL in particular there is the mesa-opencl-icd package. But the Panfrost driver states that OpenCL is not supported yet: Panfrost — The Mesa 3D Graphics Library latest documentation

But some work was done with Mesa 25.1:

But it does not sound like OpenCL API was fully present. You could actually test with Mesa 25.2 provided by Trixie backports:

apt install -t trixie-backports mesa-opencl-icd

That should pull/upgrade all other Mesa libraries to 25.2 as well.
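To confirm that the backports upgrade actually took effect, the installed package version can be compared against 25.1, the release mentioned above as the first one with relevant Panfrost work. This is only a rough sketch: `version_ge` and `upstream_version` are hypothetical helper names, the comparison relies on GNU `sort -V`, and the 25.1 threshold is an assumption taken from this thread rather than a documented minimum.

```shell
#!/bin/bash
# Hypothetical helper: succeeds if version $1 >= version $2 (needs GNU sort -V).
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Hypothetical helper: upstream part of a Debian version, e.g. "25.2.4-1" -> "25.2.4".
upstream_version() {
    ver="${1#*:}"                 # drop an epoch such as "1:" if present
    printf '%s\n' "${ver%%-*}"    # drop the Debian revision after the first "-"
}

# Only query dpkg where it exists, so the sketch is harmless on other systems.
if command -v dpkg-query >/dev/null 2>&1; then
    installed="$(dpkg-query -W -f='${Version}' mesa-opencl-icd 2>/dev/null)"
    if [ -n "$installed" ] && version_ge "$(upstream_version "$installed")" 25.1; then
        echo "mesa-opencl-icd $installed: new enough to try rusticl on Panfrost"
    else
        echo "mesa-opencl-icd ${installed:-not installed}: consider trixie-backports"
    fi
fi
```

A passing check only says the package is recent enough to be worth testing, not that an OpenCL device will show up.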

In case of the Quartz64, our kernel config might be missing some features … though Panfrost and Hantro are included: DietPi/.build/images/Quartz64/quartz64_defconfig at 0f11d71b8eece52abe5c37e4c03118ab72a12cd9 · MichaIng/DietPi · GitHub

If any driver or feature appears to be missing, ping me, we can add them quickly.
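To check on a running system whether a driver is built in, built as a module, or missing, something along these lines might help. It is a sketch under assumptions: `kconfig_state` and `kernel_config` are hypothetical helper names, and the kernel config is assumed to be exposed at either `/proc/config.gz` or `/boot/config-$(uname -r)`, which is not guaranteed on every image.

```shell
#!/bin/bash
# Hypothetical helper: print y, m or n for one kernel config option.
# Reads the config text from stdin, so it works with any config source.
kconfig_state() {
    awk -v opt="$1" -F= '
        $1 == opt { state = $2 }
        END { print (state == "" ? "n" : state) }
    '
}

# Hypothetical helper: emit the running kernel config, if it is exposed at all.
kernel_config() {
    if [ -r /proc/config.gz ]; then
        gzip -dc /proc/config.gz
    elif [ -r "/boot/config-$(uname -r)" ]; then
        cat "/boot/config-$(uname -r)"
    fi
}

# Panfrost (GPU) and Hantro (VPU) are the two drivers named above.
for opt in CONFIG_DRM_PANFROST CONFIG_VIDEO_HANTRO; do
    printf '%s=%s\n' "$opt" "$(kernel_config | kconfig_state "$opt")"
done
```

Note that an `n` result is also printed when no config file could be found, so it is worth checking that one of the two paths exists before trusting it.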

Regarding the NPU, so far only the vendor kernel contained the driver for RK35xx SBCs. But Linux 6.18 seems to implement it, at least for RK3588, not sure about RK356x (Quartz64): mainline-status.md · main · hardware-enablement / Rockchip upstream enablement efforts / Notes for Rockchip 3588 · GitLab

… yeah this one: Making sure you're not a bot!

Brand new in Linux 6.18. I could create a test build based on Linux 6.18-rc2 with this enabled. Armbian moved their edge kernel to 6.18 RC as well, so for any other RK35xx SBC (all but Quartz64) I’d just need to trigger a new build to test.

Well, I did try doing apt install -t trixie-backports mesa-opencl-icd on the SOQuartz but clinfo still seems to show that it’s got nothing:

root@soquartz:~# clinfo

Number of platforms 1

Platform Name rusticl

Platform Vendor Mesa/X.org

Platform Version OpenCL 3.0

Platform Profile FULL_PROFILE

Platform Extensions cl_khr_icd

Platform Extensions with Version cl_khr_icd 0x800000 (2.0.0)

Platform Numeric Version 0xc00000 (3.0.0)

Platform Extensions function suffix MESA

Platform Host timer resolution 1ns

Platform Name rusticl

Number of devices 0

NULL platform behavior

clGetPlatformInfo(NULL, CL_PLATFORM_NAME, ...) rusticl

clGetDeviceIDs(NULL, CL_DEVICE_TYPE_ALL, ...) No devices found in platform [rusticl?]

clCreateContext(NULL, ...) [default] No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_DEFAULT) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_CPU) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_GPU) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_ACCELERATOR) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_CUSTOM) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_ALL) No devices found in platform

ICD loader properties

ICD loader Name OpenCL ICD Loader

ICD loader Vendor OCL Icd free software

ICD loader Version 2.3.3

ICD loader Profile OpenCL 3.0

Anything else I can try to do?

Have you properly set up your environment (export RUSTICL_ENABLE=panfrost)?
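For anyone comparing the two `clinfo` logs in this thread, a quick way to see the effect of that variable is to count the devices reported with it set. This is a minimal sketch: `count_cl_devices` is a hypothetical helper that simply sums the `Number of devices` lines, and it assumes `clinfo` keeps that label in its human-readable output.

```shell
#!/bin/bash
# Hypothetical helper: sum the "Number of devices" lines of clinfo output on stdin.
count_cl_devices() {
    awk '/Number of devices/ { total += $NF } END { print total + 0 }'
}

# RUSTICL_ENABLE is read by Mesa's rusticl; here it is set for this one command only.
if command -v clinfo >/dev/null 2>&1; then
    devices="$(RUSTICL_ENABLE=panfrost clinfo | count_cl_devices)"
    echo "rusticl devices visible with RUSTICL_ENABLE=panfrost: $devices"
fi
```

If the count is still 0 with the variable set, the problem lies elsewhere, for example in the Mesa version or the kernel driver.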

I just read up a bit: OpenCL C is the programming language used to write the kernels that run on OpenCL devices. It is a necessity for full OpenCL support, but its presence does not mean the whole OpenCL driver stack is there. So I guess it remains true that Panfrost has no OpenCL support yet, but at least work on it is being done.

OpenCL C btw has been implemented for Bifrost and Valhall, i.e. as well for the Mali G52 of the RK3566 => SOQuartz.

So I guess for now, Rockchip’s libmali would be needed, and maybe also their vendor kernel.

What am I missing?

clinfo-fp-odroid-m1.log (16.3 KB)

********************************************************************************

ssd-006

Hardkernel ODROID-M1

CPU 0-3: schedutil 408 MHz - 1992 MHz

GPU: simple_ondemand 200 MHz - 800 MHz

6.16.0-0.rc1.17.fc43.aarch64 #1 SMP PREEMPT_DYNAMIC Sat Jun 14 11:19:02 CEST 2025

********************************************************************************

Number of platforms 1

Platform Name rusticl

Platform Vendor Mesa/X.org

Platform Version OpenCL 3.0

Platform Profile FULL_PROFILE

Platform Extensions cl_khr_icd cl_khr_byte_addressable_store cl_khr_create_command_queue cl_khr_expect_assume cl_khr_extended_versioning cl_khr_global_int32_base_atomics cl_khr_global_int32_extended_atomics cl_khr_il_program cl_khr_local_int32_base_atomics cl_khr_local_int32_extended_atomics cl_khr_integer_dot_product cl_khr_spirv_no_integer_wrap_decoration cl_khr_suggested_local_work_size cl_khr_spirv_linkonce_odr cl_khr_fp16 cl_khr_3d_image_writes cl_khr_depth_images

Platform Extensions with Version cl_khr_icd 0x400000 (1.0.0)

cl_khr_byte_addressable_store 0x400000 (1.0.0)

cl_khr_create_command_queue 0x400000 (1.0.0)

cl_khr_expect_assume 0x400000 (1.0.0)

cl_khr_extended_versioning 0x400000 (1.0.0)

cl_khr_global_int32_base_atomics 0x400000 (1.0.0)

cl_khr_global_int32_extended_atomics 0x400000 (1.0.0)

cl_khr_il_program 0x400000 (1.0.0)

cl_khr_local_int32_base_atomics 0x400000 (1.0.0)

cl_khr_local_int32_extended_atomics 0x400000 (1.0.0)

cl_khr_integer_dot_product 0x800000 (2.0.0)

cl_khr_spirv_no_integer_wrap_decoration 0x400000 (1.0.0)

cl_khr_suggested_local_work_size 0x400000 (1.0.0)

cl_khr_spirv_linkonce_odr 0x400000 (1.0.0)

cl_khr_fp16 0x400000 (1.0.0)

cl_khr_3d_image_writes 0x400000 (1.0.0)

cl_khr_depth_images 0x400000 (1.0.0)

Platform Numeric Version 0xc00000 (3.0.0)

Platform Extensions function suffix MESA

Platform Host timer resolution 1ns

Platform Name rusticl

Number of devices 1

Device Name Mali-G52 r1 (Panfrost)

Device Vendor Arm

Device Vendor ID 0

Device Version OpenCL 3.0

Device Numeric Version 0xc00000 (3.0.0)

Driver Version 25.1.3

Device OpenCL C Version OpenCL C 1.2

Device OpenCL C Numeric Version 0x402000 (1.2.0)

Device OpenCL C all versions OpenCL C 0xc00000 (3.0.0)

OpenCL C 0x402000 (1.2.0)

OpenCL C 0x401000 (1.1.0)

OpenCL C 0x400000 (1.0.0)

Device OpenCL C features __opencl_c_integer_dot_product_input_4x8bit 0x800000 (2.0.0)

__opencl_c_integer_dot_product_input_4x8bit_packed 0x800000 (2.0.0)

__opencl_c_int64 0x400000 (1.0.0)

__opencl_c_images 0x400000 (1.0.0)

__opencl_c_read_write_images 0x400000 (1.0.0)

__opencl_c_3d_image_writes 0x400000 (1.0.0)

Latest conformance test passed v0000-01-01-00

Device Type GPU

Device Profile FULL_PROFILE

Device Available Yes

Compiler Available Yes

Linker Available Yes

Max compute units 1

Max clock frequency 800MHz

Device Partition (core)

Max number of sub-devices 0

Supported partition types None

Supported affinity domains (n/a)

Max work item dimensions 3

Max work item sizes 256x256x256

Max work group size 256

Preferred work group size multiple (device) 8

Preferred work group size multiple (kernel) 8

Max sub-groups per work group 0

Preferred / native vector sizes

char 1 / 1

short 1 / 1

int 1 / 1

long 1 / 1

half 1 / 1 (cl_khr_fp16)

float 1 / 1

double 0 / 0 (n/a)

Half-precision Floating-point support (cl_khr_fp16)

Denormals No

Infinity and NANs Yes

Round to nearest Yes

Round to zero No

Round to infinity No

IEEE754-2008 fused multiply-add No

Support is emulated in software No

Single-precision Floating-point support (core)

Denormals No

Infinity and NANs Yes

Round to nearest Yes

Round to zero No

Round to infinity No

IEEE754-2008 fused multiply-add No

Support is emulated in software No

Correctly-rounded divide and sqrt operations No

Double-precision Floating-point support (n/a)

Address bits 64, Little-Endian

Global memory size 4261412864 (3.969GiB)

Error Correction support No

Max memory allocation 2147483647 (2GiB)

Unified memory for Host and Device Yes

Shared Virtual Memory (SVM) capabilities (core)

Coarse-grained buffer sharing No

Fine-grained buffer sharing No

Fine-grained system sharing No

Atomics No

Minimum alignment for any data type 128 bytes

Alignment of base address 4096 bits (512 bytes)

Preferred alignment for atomics

SVM 0 bytes

Global 0 bytes

Local 0 bytes

Atomic memory capabilities relaxed, work-group scope

Atomic fence capabilities relaxed, acquire/release, work-group scope

Max size for global variable 0

Preferred total size of global vars 0

Global Memory cache type None

Image support Yes

Max number of samplers per kernel 32

Max size for 1D images from buffer 65536 pixels

Max 1D or 2D image array size 2048 images

Base address alignment for 2D image buffers 0 bytes

Pitch alignment for 2D image buffers 0 pixels

Max 2D image size 32768x32768 pixels

Max 3D image size 32768x32768x32768 pixels

Max number of read image args 128

Max number of write image args 64

Max number of read/write image args 64

Pipe support No

Max number of pipe args 0

Max active pipe reservations 0

Max pipe packet size 0

Local memory type Global

Local memory size 32768 (32KiB)

Max number of constant args 16

Max constant buffer size 67108864 (64MiB)

Generic address space support No

Max size of kernel argument 4096 (4KiB)

Queue properties (on host)

Out-of-order execution Yes

Profiling Yes

Device enqueue capabilities (n/a)

Queue properties (on device)

Out-of-order execution No

Profiling No

Preferred size 0

Max size 0

Max queues on device 0

Max events on device 0

Prefer user sync for interop Yes

Profiling timer resolution 41ns

Execution capabilities

Run OpenCL kernels Yes

Run native kernels No

Non-uniform work-groups No

Work-group collective functions No

Sub-group independent forward progress No

IL version SPIR-V_1.0 SPIR-V_1.1 SPIR-V_1.2 SPIR-V_1.3 SPIR-V_1.4 SPIR-V_1.5 SPIR-V_1.6

ILs with version SPIR-V 0x400000 (1.0.0)

SPIR-V 0x401000 (1.1.0)

SPIR-V 0x402000 (1.2.0)

SPIR-V 0x403000 (1.3.0)

SPIR-V 0x404000 (1.4.0)

SPIR-V 0x405000 (1.5.0)

SPIR-V 0x406000 (1.6.0)

printf() buffer size 1048576 (1024KiB)

Built-in kernels (n/a)

Built-in kernels with version (n/a)

Device Extensions cl_khr_byte_addressable_store cl_khr_create_command_queue cl_khr_expect_assume cl_khr_extended_versioning cl_khr_global_int32_base_atomics cl_khr_global_int32_extended_atomics cl_khr_il_program cl_khr_local_int32_base_atomics cl_khr_local_int32_extended_atomics cl_khr_integer_dot_product cl_khr_spirv_no_integer_wrap_decoration cl_khr_suggested_local_work_size cl_khr_spirv_linkonce_odr cl_khr_fp16 cl_khr_3d_image_writes cl_khr_depth_images

Device Extensions with Version cl_khr_byte_addressable_store 0x400000 (1.0.0)

cl_khr_create_command_queue 0x400000 (1.0.0)

cl_khr_expect_assume 0x400000 (1.0.0)

cl_khr_extended_versioning 0x400000 (1.0.0)

cl_khr_global_int32_base_atomics 0x400000 (1.0.0)

cl_khr_global_int32_extended_atomics 0x400000 (1.0.0)

cl_khr_il_program 0x400000 (1.0.0)

cl_khr_local_int32_base_atomics 0x400000 (1.0.0)

cl_khr_local_int32_extended_atomics 0x400000 (1.0.0)

cl_khr_integer_dot_product 0x800000 (2.0.0)

cl_khr_spirv_no_integer_wrap_decoration 0x400000 (1.0.0)

cl_khr_suggested_local_work_size 0x400000 (1.0.0)

cl_khr_spirv_linkonce_odr 0x400000 (1.0.0)

cl_khr_fp16 0x400000 (1.0.0)

cl_khr_3d_image_writes 0x400000 (1.0.0)

cl_khr_depth_images 0x400000 (1.0.0)

NULL platform behavior

clGetPlatformInfo(NULL, CL_PLATFORM_NAME, ...) rusticl

clGetDeviceIDs(NULL, CL_DEVICE_TYPE_ALL, ...) Success [MESA]

clCreateContext(NULL, ...) [default] Success [MESA]

clCreateContextFromType(NULL, CL_DEVICE_TYPE_DEFAULT) Success (1)

Platform Name rusticl

Device Name Mali-G52 r1 (Panfrost)

clCreateContextFromType(NULL, CL_DEVICE_TYPE_CPU) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_GPU) Success (1)

Platform Name rusticl

Device Name Mali-G52 r1 (Panfrost)

clCreateContextFromType(NULL, CL_DEVICE_TYPE_ACCELERATOR) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_CUSTOM) No devices found in platform

clCreateContextFromType(NULL, CL_DEVICE_TYPE_ALL) Success (1)

Platform Name rusticl

Device Name Mali-G52 r1 (Panfrost)

ICD loader properties

ICD loader Name OpenCL ICD Loader

ICD loader Vendor OCL Icd free software

ICD loader Version 2.3.3

ICD loader Profile OpenCL 3.0

This is plain Debian or DietPi without any additional library or driver blob? And where did you get Mesa 25.1.3 from (since Debian ships 25.0 on Trixie and 25.2 via backports)?

So the Mesa website makes a false statement?

I mean, great if it works, I am just confused, since all available info states it should not.

25.1.3 was the mainline release version at the time the log was taken; currently I am running 25.2.4.

It is not my fault that Debian, under the pretext of stability, only offers outdated versions.

I follow current mainline releases because that’s the only way to enjoy the latest development stages.

Look at this:

[ 6.194830] [drm] Initialized rocket 0.0.0 for rknn on minor 0

[ 6.201215] rocket fdab0000.npu: Rockchip NPU core 0 version: 1179210309

[ 6.224973] rocket fdac0000.npu: Rockchip NPU core 1 version: 1179210309

[ 6.234810] rocket fdad0000.npu: Rockchip NPU core 2 version: 1179210309

The rocket has been launched, so I guess it is time to rebuild Mesa with the rocket driver enabled.
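To check the same thing on another board, the kernel log can be searched for those probe messages. A small sketch, assuming the message format stays as shown in the log excerpt above; `count_rocket_cores` is a hypothetical helper name, and reading `dmesg` may require root on some systems.

```shell
#!/bin/bash
# Hypothetical helper: count NPU cores announced by the rocket driver
# in a kernel log read from stdin.
count_rocket_cores() {
    # grep -c prints 0 but exits non-zero when nothing matches, hence "|| true".
    grep -c 'rocket .*Rockchip NPU core [0-9]* version' || true
}

if command -v dmesg >/dev/null 2>&1; then
    cores="$(dmesg 2>/dev/null | count_rocket_cores)"
    echo "NPU cores probed by the rocket driver: $cores"
fi
```

With the log lines quoted above as input, the helper would count three cores.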

Mesamatrix reflects the current implementation status.

I.e. you compiled it yourself?

Trixie backports provides v25.2.4 as well. Luckily, when on latest stable Debian, backports provide the latest Mesa quite quickly, i.e. no need to compile manually.

Linux 6.18-rc2? Mesa userland libs do not need to be rebuilt with Rocket support somehow, but only the kernel with Rocket (and Panfrost of course) enabled, right?

Normally, my development team takes care of this for me. Only if I want to try out a feature that is not yet available in the current mainline releases do I have to handle it myself.

In this case, it is this patch:

--- mesa.spec.orig	2025-10-26 16:31:28.683055884 +0100

+++ mesa.spec	2025-10-26 16:34:07.671550876 +0100

@@ -410,7 +410,7 @@ rewrite_wrap_file rustc-hash

%meson \

-Dplatforms=x11,wayland \

%if 0%{?with_hardware}

- -Dgallium-drivers=llvmpipe,virgl,nouveau%{?with_r300:,r300}%{?with_crocus:,crocus}%{?with_i915:,i915}%{?with_iris:,iris}%{?with_vmware:,svga}%{?with_radeonsi:,radeonsi}%{?with_r600:,r600}%{?with_asahi:,asahi}%{?with_freedreno:,freedreno}%{?with_etnaviv:,etnaviv}%{?with_tegra:,tegra}%{?with_vc4:,vc4}%{?with_v3d:,v3d}%{?with_lima:,lima}%{?with_panfrost:,panfrost}%{?with_vulkan_hw:,zink}%{?with_d3d12:,d3d12} \

+ -Dgallium-drivers=llvmpipe,virgl,nouveau%{?with_r300:,r300}%{?with_crocus:,crocus}%{?with_i915:,i915}%{?with_iris:,iris}%{?with_vmware:,svga}%{?with_radeonsi:,radeonsi}%{?with_r600:,r600}%{?with_asahi:,asahi}%{?with_freedreno:,freedreno}%{?with_etnaviv:,etnaviv}%{?with_tegra:,tegra}%{?with_vc4:,vc4}%{?with_v3d:,v3d}%{?with_lima:,lima}%{?with_panfrost:,panfrost}%{?with_vulkan_hw:,zink}%{?with_d3d12:,d3d12},rocket \

%else

-Dgallium-drivers=llvmpipe,virgl \

%endif

and triggering the build.

But only as far as all necessary build options for a desired feature are preconfigured.

For Mesa, see above. Kernel support is available out of the box as long as the necessary build options are set; what might be missing is the appropriate wiring in the device tree for a specific device. But that is nothing that can’t be made up for with a DT overlay.

Okay, I need to keep an eye on this then. Probably the rocket driver will be added to the Mesa build options once Linux 6.18 is stable. Else I’ll open an MR with a patch at Debian, and if needed another one against their Linux build config to add the rocket module. However, this is unrelated to OpenCL.

Just for posterity, I’ve been playing around a bit with AI inference in the meantime.

With this script, I generated a container and delegated my first inference to the NPU:

#!/bin/bash

IMAGE="grace_hopper.bmp"

MODEL="mobilenet_v1_1_224_quant"

WORKBENCH="."

ENVIRONMENT="${WORKBENCH}/python/3.13"

[ "${1}" == "setup" ] || [ ! -f ${ENVIRONMENT}/bin/activate ] && BOOTSTRAP="true"

[ -v BOOTSTRAP ] && python3.13 -m venv ${ENVIRONMENT}

source ${ENVIRONMENT}/bin/activate

[ -v BOOTSTRAP ] && pip install pillow

[ -v BOOTSTRAP ] && pip install ai-edge-litert-nightly

TEFLON_DEBUG=verbose ETNA_MESA_DEBUG=ml_dbgs python ${WORKBENCH}/classification-litert.py \
-i ${WORKBENCH}/example/${IMAGE} \
-m ${WORKBENCH}/model/${MODEL}.tflite \
-l ${WORKBENCH}/model/${MODEL}.labels \
-e /usr/lib64/libteflon.so

See here classification-3.13-litert.log (12.9 KB) as proof.

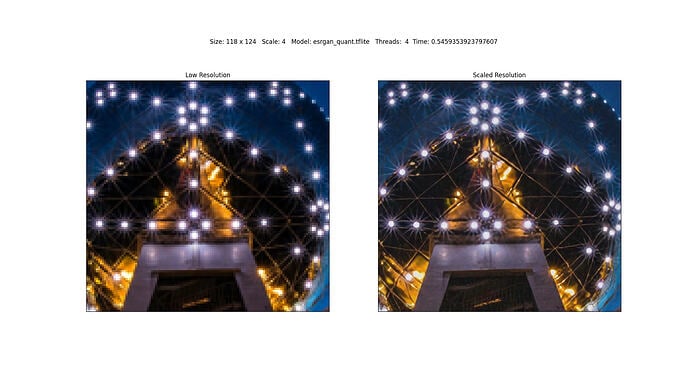

At the moment, I’m experimenting with super resolution and find the results quite impressive.

are the real resolutions.

The inference is still done with the CPU here because I still need to learn how to generate a super-resolution-model.tflite that can be delegated to the Rockchip NPU. But the execution time is already not bad as it is.

On the other hand, my infrastructure is already prepared, I ‘just’ need to replace the model.tflite, because I already know from the classification experiment how to use the external teflon delegate.

Oh, by the way, the Etnaviv NPU works with it as well, without any changes to how it is used.

What I was wondering: Is the API between vendor RKNPU and mainline Rocket NPU consistent? Both provide a /dev/dri/render* node, but no idea whether this is what AI/ML libraries usually use, and whether the nodes behave the same?
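One way to at least see which kernel driver sits behind each node is to follow the `driver` symlinks in sysfs. This is only a sketch: `list_node_drivers` is a hypothetical helper, and it assumes that render nodes appear under `/sys/class/drm/renderD*` and that accelerator nodes, where the kernel provides them, appear under `/sys/class/accel/accel*`.

```shell
#!/bin/bash
# Hypothetical helper: print "<node> <driver>" for each DRM render node and
# accel node found below the given sysfs root (defaults to /sys).
list_node_drivers() {
    root="${1:-/sys}"
    for node in "$root"/class/drm/renderD* "$root"/class/accel/accel*; do
        # Unmatched globs stay literal and nodes without a bound driver have
        # no symlink; both cases are skipped here.
        [ -e "$node/device/driver" ] || continue
        printf '%s %s\n' "$(basename "$node")" \
            "$(basename "$(readlink -f "$node/device/driver")")"
    done
}

list_node_drivers
```

This only tells which driver owns a node. Whether a given AI/ML library can talk to that driver is a separate question that the listing cannot answer.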

How did you come to this realization?

Compute Accelerators

A question, not a realization. What I am trying to find out is whether software/libraries which worked with the vendor RKNPU will work with the mainline Rocket NPU the same way, or whether switching from vendor Linux 6.1 to mainline Linux 6.18 with Rocket NPU enabled will be a breaking change in any case for AI/ML users.

No more or less than GPU, VPU, … as well.

That’s simply the advantage of out of tree, vendor-invented interfaces that are not implemented in a mainline-compliant way.

They do create user bindings for a specific manufacturer, but they are rarely adopted by other device vendors because they are usually not portable enough.

But that’s not a problem thanks to the provider’s well-supported quality software stack, because it leaves no wishes unfulfilled and there is no need for further mainline developments.

It seems the mainline NPU interface has existed for a while, so libraries should support it, and then hopefully the Rocket NPU as well.

I was just afraid it might be similar to RKMPP vs mainline Hantro VPU driver, where e.g. Jellyfin supports the former, but not the latter for RK3588.