Creating a bug report/issue

Required Information

- DietPi version | latest

- Distro version | bullseye

- Kernel version | Linux DietPi 6.0.13-meson64 #22.11.2 SMP PREEMPT Sun Dec 18 16:52:19 CET 2022 aarch64 GNU/Linux

- SBC model | Odroid HC4

Hi guys, I will try to explain as best I can what has happened and what I have been trying to do without success.

Until two weeks ago, my Odroid HC4 had two drives installed: one HDD (sdb1, Seagate Barracuda) and one SSD (sda1). The SSD held a swap partition and user data (not the rootfs), and the HDD held only data downloaded by Transmission. On the HDD I had set an idle spindown of 10 minutes in dietpi-drive_manager, and even though this drive doesn’t support APM, it went to standby perfectly.

When I received the new HDD (Seagate IronWolf, sda1), I replaced the SSD with it, after moving the user data back to the microSD card and deleting the swap partition. Then I set up idle spindown again, and it didn’t work: neither drive goes to standby (the new one doesn’t support APM either).

I started looking for solutions online.

I found this post, https://andrejacobs.org/linux/spinning-down-seagate-hard-drives-in-ubuntu-20-04/, and this:

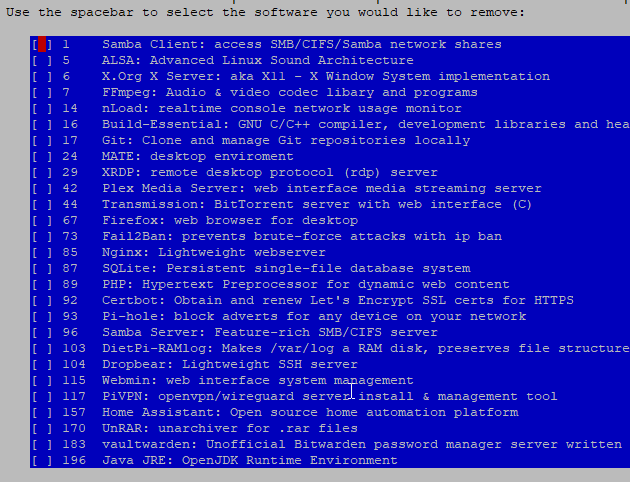

I started by installing the openSeaChest tools in order to set idle spindown on the new drive. Before anything else, I disabled hdparm in dietpi-drive_manager.

I installed the openSeaChest tools as described in the post and saw that the drive had no idle timers configured, so I set them, and now it goes to standby. I then did the same on the old drive, but nothing changed: either it doesn’t go to standby, or if it does, something wakes it up.

Then I installed hd-idle to try another way of spinning down the old drive, and again nothing. If it goes to sleep, something wakes it up.

I searched again for whatever could be waking the drive up, and I found this: https://serverfault.com/a/834902. I installed auditd and ran the same commands it describes, and the result suggests that hdparm is the process waking the drive.

I don’t know what else to do. Do you know of another tool to find what wakes the drive, or to see which processes are using the drive in real time?
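To at least see when the drive gets touched, something like the following Python sketch might help. It polls the kernel's I/O counters in `/sys/block/<device>/stat` (first field: reads completed, fifth field: writes completed) and prints a timestamp whenever they change. The device name `sdb`, the 5-second interval, and the function names are only assumptions for illustration. I am also assuming that reading sysfs does not itself wake the disk, and that commands which are not regular reads or writes may not show up in these counters.

```python
import sys
import time
from pathlib import Path


def parse_stat(text):
    """Return (reads_completed, writes_completed) from a /sys/block/<dev>/stat line."""
    fields = text.split()
    # Field 1 is completed read I/Os, field 5 is completed write I/Os.
    return int(fields[0]), int(fields[4])


def describe_change(before, after):
    """Return a short message if any I/O completed between two samples, else None."""
    reads = after[0] - before[0]
    writes = after[1] - before[1]
    if reads == 0 and writes == 0:
        return None
    return f"{reads} read(s), {writes} write(s)"


def watch(device, interval=5.0):
    """Poll the counters forever and log every interval in which they changed."""
    stat_path = Path("/sys/block") / device / "stat"
    previous = parse_stat(stat_path.read_text())
    while True:
        time.sleep(interval)
        current = parse_stat(stat_path.read_text())
        message = describe_change(previous, current)
        if message:
            print(time.strftime("%H:%M:%S"), device, message, flush=True)
        previous = current


# Only start polling when a device name is given, e.g.: python3 watch_disk.py sdb
if __name__ == "__main__" and len(sys.argv) > 1:
    watch(sys.argv[1])
```

This only tells when reads or writes reach the drive, not which process issued them, but the timestamps could be matched against the auditd log or other system logs to narrow it down.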

It’s driving me nuts, because I have the board in the living room, and constantly hearing the drive go to standby and wake up again is a bit annoying.

Thank you so much as always.